import math

import torch
from torch import nn
from torch.nn import functional as F
from d2l import torch as d2l

batch_size, num_steps = 32, 35
train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

X = torch.arange(10).reshape((2, 5))
print(X)
print(F.one_hot(X.T, 28).shape)
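The transpose before one-hot encoding is easy to overlook, so here is a minimal sketch of what it does to the shapes. It assumes the same toy minibatch as above and the vocabulary size of 28 used in the `F.one_hot` call; the name `encoded` is only for illustration.

```python
import torch
from torch.nn import functional as F

# Toy minibatch of token indices: (batch_size=2, num_steps=5)
X = torch.arange(10).reshape((2, 5))

# Transposing first puts the time step on the leading axis, so the result is
# (num_steps, batch_size, vocab_size) and can be iterated step by step.
encoded = F.one_hot(X.T, 28)
print(encoded.shape)

# At time step 0 the two sequences hold tokens 0 and 5, so the single 1 in
# each one-hot row sits at those positions.
print(encoded[0].argmax(dim=1))
```

Putting time first is what lets the forward function loop over `inputs` one time step at a time.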

# Initialize the parameters of the recurrent neural network model
def get_params(vocab_size, num_hiddens, device):
    num_inputs = num_outputs = vocab_size

    def normal(shape):
        return torch.randn(size=shape, device=device) * 0.01

    # Hidden-layer parameters
    W_xh = normal((num_inputs, num_hiddens))
    W_hh = normal((num_hiddens, num_hiddens))
    b_h = torch.zeros(num_hiddens, device=device)
    # Output-layer parameters
    W_hq = normal((num_hiddens, num_outputs))
    b_q = torch.zeros(num_outputs, device=device)
    # Attach gradients
    params = [W_xh, W_hh, b_h, W_hq, b_q]
    for param in params:
        param.requires_grad_(True)
    return params

# Initialize the hidden state (returned as a one-element tuple)
def init_rnn_state(batch_size, num_hiddens, device):
    return (torch.zeros((batch_size, num_hiddens), device=device),)

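# Define how the hidden state and output are computed at each time step
def rnn(inputs, state, params):
    """
    A simple RNN layer.

    Args:
    - inputs: input sequence tensor of shape (num_steps, batch_size, vocab_size).
    - state: initial RNN state, a one-element tuple holding a tensor of shape
      (batch_size, num_hiddens).
    - params: list of all required parameters, where:
      - W_xh: input-to-hidden weight matrix.
      - W_hh: hidden-to-hidden weight matrix.
      - b_h: hidden-layer bias.
      - W_hq: hidden-to-output weight matrix.
      - b_q: output bias.

    Returns:
    - outputs: per-step outputs concatenated along dim 0, of shape
      (num_steps * batch_size, vocab_size).
    - state: the updated hidden state, as a one-element tuple.
    """
    # Unpack the parameters
    W_xh, W_hh, b_h, W_hq, b_q = params
    # Unpack the initial state
    H, = state
    outputs = []
    # Iterate over the time steps of the input sequence;
    # each X has shape (batch_size, vocab_size)
    for X in inputs:
        # Compute the hidden state
        H = torch.tanh(torch.mm(X, W_xh)
                       + torch.mm(H, W_hh)
                       + b_h)
        # Compute the output
        Y = torch.mm(H, W_hq) + b_q
        outputs.append(Y)
    # Concatenate the outputs of all time steps
    return torch.cat(outputs, dim=0), (H,)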

class RNNModelWScratch:
    def __init__(self, vocab_size, num_hiddens, device,
                 get_params, init_state, forward_fn):
        self.vocab_size, self.num_hiddens = vocab_size, num_hiddens
        self.params = get_params(vocab_size, num_hiddens, device)
        self.init_state, self.forward_fn = init_state, forward_fn

    def __call__(self, X, state):
        X = F.one_hot(X.T, self.vocab_size).type(torch.float32)
        return self.forward_fn(X, state, self.params)

    def begin_state(self, batch_size, device):
        return self.init_state(batch_size, self.num_hiddens, device)

# Check that the outputs have the expected shapes
num_hiddens = 512
net = RNNModelWScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params,
                       init_rnn_state, rnn)
state = net.begin_state(X.shape[0], d2l.try_gpu())
Y, new_state = net(X.to(d2l.try_gpu()), state)
print(Y.shape, len(new_state), new_state[0].shape)

def predict_ch8(prefix, num_preds, net, vocab, device):
    """
    Generate new characters following the prefix.
    """
    # Initialize the hidden state; only one string is predicted,
    # so batch_size = 1
    state = net.begin_state(batch_size=1, device=device)
    outputs = [vocab[prefix[0]]]  # Index of the first character of the prefix
    get_input = lambda: torch.tensor([outputs[-1]], device=device).reshape((1, 1))
    for y in prefix[1:]:  # Warm-up period
        _, state = net(get_input(), state)
        outputs.append(vocab[y])
    for _ in range(num_preds):  # Predict num_preds steps
        y, state = net(get_input(), state)
        outputs.append(int(y.argmax(dim=1).reshape(1)))
    return ''.join([vocab.idx_to_token[i] for i in outputs])

# See what the model generates before any training
print(predict_ch8('time traveller ', 10, net, vocab, d2l.try_gpu()))

# Gradient clipping
def grad_clipping(net, theta):
    """
    Clip the gradients so that their global L2 norm is at most theta.
    """
    if isinstance(net, nn.Module):
        params = [p for p in net.parameters() if p.requires_grad]
    else:
        params = net.params
    norm = torch.sqrt(sum(torch.sum((p.grad ** 2)) for p in params))
    if norm > theta:
        for param in params:
            param.grad[:] *= theta / norm
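To make the effect of clipping concrete, here is a small sketch that applies the same rescaling, g ← min(1, θ / ‖g‖) · g, to two made-up parameters. The tensors `w` and `b` and the toy loss are assumptions chosen only so that the gradient norm is easy to check by hand; they are not part of the model above.

```python
import torch

# Two hypothetical parameters, used only for illustration
w = torch.tensor([3.0, 0.0], requires_grad=True)
b = torch.tensor([4.0], requires_grad=True)

# With this loss the gradient of each parameter equals the parameter itself,
# so the gradients are [3, 0] and [4], with global L2 norm 5.
loss = (w ** 2).sum() / 2 + (b ** 2).sum() / 2
loss.backward()

params, theta = [w, b], 1.0
norm = torch.sqrt(sum(torch.sum(p.grad ** 2) for p in params))
if norm > theta:
    for p in params:
        p.grad[:] *= theta / norm  # rescale in place; the direction is unchanged

clipped_norm = torch.sqrt(sum(torch.sum(p.grad ** 2) for p in params))
print(float(norm), float(clipped_norm))
```

Because every gradient is multiplied by the same factor, clipping shortens the update step without changing its direction.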

def train_epoch_ch8(net, train_iter, loss, updater, device, use_random_iter):
    """Train the network for one epoch."""
    state, timer = None, d2l.Timer()
    metric = d2l.Accumulator(2)  # Sum of training loss, number of tokens
    for X, Y in train_iter:
        if state is None or use_random_iter:
            # Initialize state on the first iteration or when using random sampling
            state = net.begin_state(batch_size=X.shape[0], device=device)
        else:
            if isinstance(net, nn.Module) and not isinstance(state, tuple):
                # For nn.GRU, state is a single tensor
                state.detach_()
            else:
                # For nn.LSTM and the from-scratch model, state is a tuple of tensors
                for s in state:
                    s.detach_()
        y = Y.T.reshape(-1)
        X, y = X.to(device), y.to(device)
        y_hat, state = net(X, state)
        l = loss(y_hat, y.long()).mean()
        if isinstance(updater, torch.optim.Optimizer):
            updater.zero_grad()
            l.backward()
            grad_clipping(net, 1)
            updater.step()
        else:
            l.backward()
            grad_clipping(net, 1)
            # The loss is already averaged, so use batch_size=1
            updater(batch_size=1)
        metric.add(l * y.numel(), y.numel())
    # Perplexity and tokens per second
    return math.exp(metric[0] / metric[1]), metric[1] / timer.stop()
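The first value returned by `train_epoch_ch8` is the perplexity: the exponential of the average cross-entropy per token. As a rough sanity check, the sketch below computes it directly from the probabilities a model assigns to the correct next tokens. The helper `perplexity` and its argument `token_probs` are hypothetical names introduced here, and the vocabulary size of 28 is taken from the one-hot example earlier.

```python
import math

def perplexity(token_probs):
    """Perplexity from the probabilities assigned to each true next token."""
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)

# A model that guesses uniformly over 28 tokens has perplexity about 28
uniform = perplexity([1 / 28] * 10)

# A model that always assigns probability 1 to the true token has perplexity 1
perfect = perplexity([1.0] * 10)

print(uniform, perfect)
```

Read this way, perplexity is roughly the number of equally likely choices the model is hesitating between at each step, which is why a value near 1 indicates that the training text has been almost memorized.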

def train_ch8(net, train_iter, vocab, lr, num_epochs, device,
              use_random_iter=False):
    """Train the model."""
    loss = nn.CrossEntropyLoss()
    animator = d2l.Animator(xlabel='epoch', ylabel='perplexity',
                            legend=['train'], xlim=[10, num_epochs])
    # Initialize the updater
    if isinstance(net, nn.Module):
        updater = torch.optim.SGD(net.parameters(), lr)
    else:
        updater = lambda batch_size: d2l.sgd(net.params, lr, batch_size)
    predict = lambda prefix: predict_ch8(prefix, 50, net, vocab, device)
    # Train and predict
    for epoch in range(num_epochs):
        ppl, speed = train_epoch_ch8(
            net, train_iter, loss, updater, device, use_random_iter)
        if (epoch + 1) % 10 == 0:
            print(predict('time traveller'))
            animator.add(epoch + 1, [ppl])
    print(f'Perplexity {ppl:.1f}, {speed:.1f} tokens/sec')
    print(predict('time traveller'))
    print(predict('traveller'))

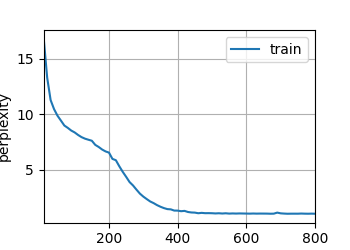

num_epochs, lr = 800, 0.5
train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu())  # Sequential partitioning
d2l.plt.show()

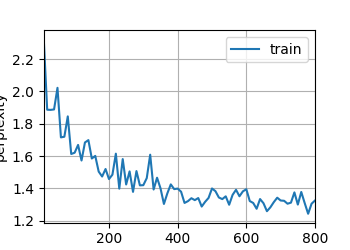

train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu(),
          use_random_iter=True)  # Random sampling
d2l.plt.show()

Sequential partitioning

Perplexity 1.0, 110940.8 tokens/sec

time travelleryou can show black is white by argument said filby

travelleryou can show black is white by argument said filby

Random sampling

Perplexity 1.3, 81862.0 tokens/sec

time traveller held in his hand was a glitteringmetallic framewo

travellerit s against reason said filbywhat space as our ma
